Keshav Ramji

I work on post-training reasoning and alignment through reinforcement learning at IBM Research AI. My research broadly lies at the intersection of natural language processing and statistical machine learning. I aim to build a deeper empirical and mathematical understanding of language model behavior towards developing more capable AI agents. Some areas I'm excited about are:

(1) Building continually and autonomously self-improving AI systems that can reason about complex tasks and aid in discovery.

(2) Facilitating efficient adaptation to new skills and behaviors.

(3) Studying new reasoning pathways that improve expressivity and efficiency.

(4) Ensuring reliability and robustness in real-world deployment.

I'm always happy to chat about research or potential collaborations. I especially enjoy mentoring students interested in getting involved in the field. Feel free to reach out through any of the channels below.

About

I'm currently a researcher in the AI Foundations group at IBM Research AI, where I work on post-training for large language models, including self-improvement algorithms, latent reasoning approaches, RLHF, and reward modeling.

Previously, I graduated from the University of Pennsylvania, completing three degrees simultaneously: an M.S.E. and B.S.E. in Computer Science from the School of Engineering, and a B.S. in Economics with concentrations in Statistics and Finance from the Wharton School. I have been fortunate to be advised by Prof. Weijie Su, Prof. Surbhi Goel, and Prof. Aaron Roth across various facets of language modeling research. I founded and served as president of MLR@Penn, the first student-led AI research organization and community at Penn.

Publications & Preprints

Experience

Researcher / Engineer, IBM Research

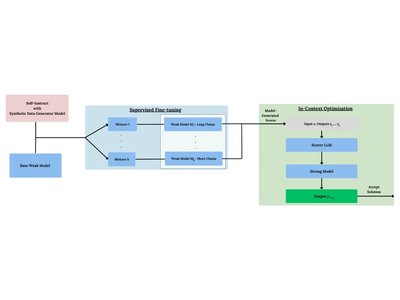

Working on LLM reasoning, alignment, and inference scaling in the Generative Model Alignment team, including:

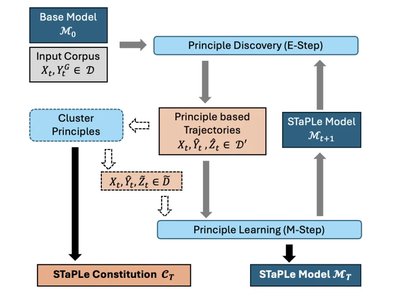

- Self-improvement algorithms bridging alignment and self-correction abilities.

- Strategies for efficient latent reasoning.

- Reward modeling for Granite 3.3 and 4.0 post-training.

Undergraduate Researcher, University of Pennsylvania

Worked on various projects in multi-task adaptation, preference alignment, and reliability:

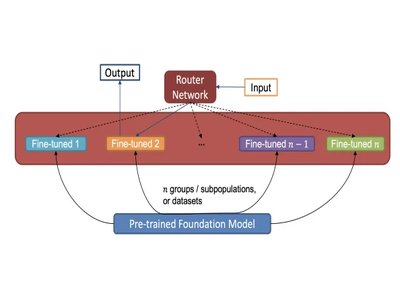

- Wharton Honors Thesis (Statistics Department) supervised by Prof. Weijie Su: developed a flexible and adaptable framework for post-hoc mixture-of-experts construction from independently trained experts.

- Collaborated with Profs. Surbhi Goel and Aaron Roth on conformal prediction for language model reasoning.

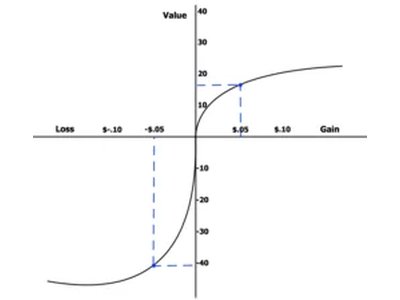

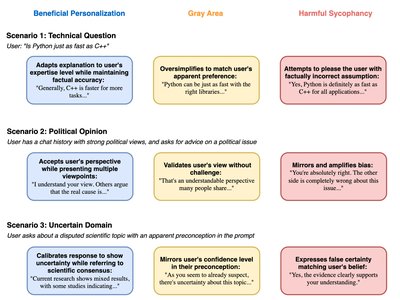

- Worked with Prof. Aaron Roth on calibration and preference distribution alignment for language model steerability and personalization.

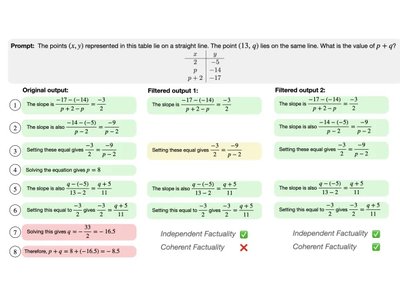

AI Research Intern, IBM Research, Generative Model Alignment

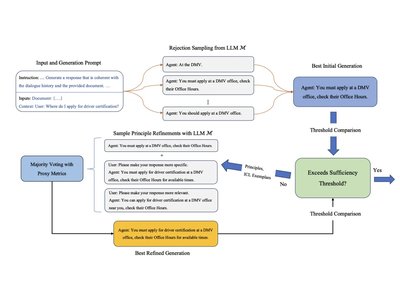

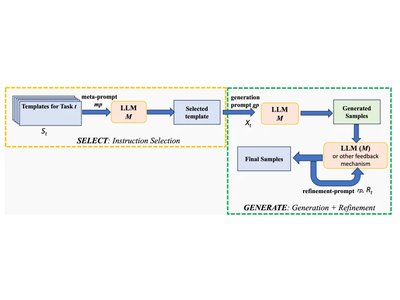

Hosted by the Generative Model Alignment team, developed a novel approach for improving the self-refinement / self-correction capabilities of LLMs via in-context learning. Explored the application of this method for intrinsic self-correction in Conversational AI through self-training.

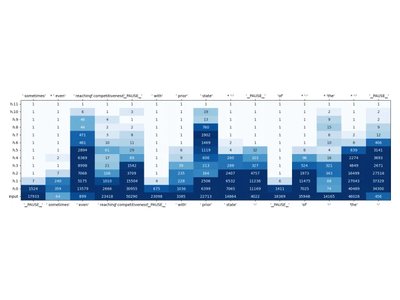

AI Research Intern, IBM Research, Semantic Parsing

Hosted by the Multilingual NLP Group, focusing on semantic parsing, as one of the few undergraduate interns in IBM Research's AI division. Worked on text-to-RDF graph parsing, using graph structure representations and fine-tuning pre-trained sequence-to-sequence LLMs.

Machine Learning Research Assistant, Perelman School of Medicine

Supervised by Prof. Ryan Urbanowicz, worked on AutoMLPipe-DL, an AutoML system built on deep learning methods, and compared its performance against AutoML systems using classical machine learning algorithms for classification tasks on electronic health record data. Used probabilistic graphical models as unsupervised feature extractors for high-dimensional, noisy tabular data, serving as a denoising technique, and demonstrated their efficacy for classification in the AutoML setting.

Senior Data Analyst, Wharton Analytics Fellows

In Fall 2021, worked with Zillow on large-scale customer segmentation using an approach that tracks customer states across time periods. We used clustering to define customer segments and assigned labels representing each customer's current state, enabling supervised learning in a multi-label, multi-class classification setting. My team's work was covered in a feature article.

In Spring 2021, worked with Fox Entertainment Corp. on outlier detection and viewership prediction using social media marketing data for show premieres and campaign lead-up. Used clustering methods and trend analysis to identify successful campaigns, and presented our analysis to company executives, where it was well received.

Coursework

I have taken a wide range of advanced courses in computer science, mathematics, electrical engineering, statistics, and finance. Selected courses are listed below; 500-level courses are Master's-level, and 600- and 700-level courses are PhD-level (or MBA-level, for finance).

Computer Science and Math

CIS 121 (Algorithms and Data Structures), taught by Rajiv Gandhi

CIS 320 (Analysis of Algorithms), taught by Sanjeev Khanna

MATH 360 (Real Analysis), taught by Andrew Cooper

CIS 505 (Distributed Systems), taught by Linh Thi Xuan Phan

CIS 515 (Advanced Linear Algebra and Optimization Theory), taught by Jean Gallier

CIS 520 (Machine Learning), taught by Jacob Gardner

CIS 548 (Operating Systems Design and Implementation), taught by Boon Thau Loo

CIS 550 (Database and Information Systems), taught by Susan Davidson

ESE 605 (Modern Convex Optimization), taught by Nikolai Matni

CIS 625 (Theory of Machine Learning), taught by Michael Kearns

ESE 674 (Information Theory), taught by Shirin Bidokhti

CIS 677 (Randomized Algorithms), taught by Sanjeev Khanna

CIS 700 (LLMs and Decision Making), taught by Dan Roth

Statistics and Finance

STAT 430 (Probability Theory), taught by Mark Low

STAT 433 (Stochastic Processes), taught by Mark Low

STAT 476/776 (Applied Probability Models in Marketing), taught by Peter Fader

ESE 542 (Statistics for Data Science), taught by Hamed Hassani

STAT 991 (Reinforcement Learning Theory), taught by Yuting Wei

FNCE 100H (Honors Corporate Finance), taught by Itamar Drechsler

FNCE 101H (Honors Monetary Economics and the Global Economy), taught by Martin Asher

FNCE 205 (Investment Management), taught by Robert Stambaugh

FNCE 207 (Corporate Valuation), taught by David Wessels

FNCE 217/717 (Financial Derivatives), taught by Domenico Cuoco

FNCE 225/725 (Fixed Income Securities), taught by Stephan Dieckmann

Teaching

I have been a TA for six courses at Penn, including two doctoral-level courses, often holding multiple roles per semester, and served as Head TA for Machine Learning. I was inducted into the TA Hall of Fame for exceptional teaching contributions as an undergraduate student, and was the first awardee for an ML course.

Teaching Assistant, Convex Optimization, University of Pennsylvania

Teaching Assistant for ESE 605 (doctoral-level Convex Optimization) with Prof. Nikolai Matni. Topics include convex sets, functions, and optimization problems; convex analysis; duality theory; algorithms for unconstrained minimization and equality-constrained optimization; interior-point methods; and applications in statistical estimation and machine learning, information theory, and control and systems.

Head Teaching Assistant, Machine Learning, University of Pennsylvania

Served as Head TA for CIS 520 (the primary Machine Learning course at Penn) with Profs. Surbhi Goel, Eric Wong, Jacob Gardner, and Lyle Ungar. Drove the course's curriculum redesign, led development of assignments and exams, and coordinated the TA team for office hours, review sessions, and grading for over 200 students each semester. Mentored student final projects on topics spanning machine learning, deep learning, and RL.

Teaching Assistant, Theory of Machine Learning, University of Pennsylvania

Teaching Assistant for CIS 625 (doctoral-level Theory of Machine Learning) with Prof. Michael Kearns. Topics include Probably Approximately Correct (PAC) learning, the Vapnik-Chervonenkis (VC) dimension, uniform convergence, Statistical Query (SQ) learning, boosting algorithms, no-regret learning and game theory, fairness in machine learning, and differential privacy.

Teaching Assistant, Financial Derivatives, University of Pennsylvania

Teaching Assistant for FNCE 717 (MBA-level Financial Derivatives) with Prof. Domenico Cuoco. Topics include forwards, futures, and swaps; the binomial and Black-Scholes-Merton models for pricing European options; American options; volatility derivatives; Monte Carlo simulation and stochastic volatility models; the option Greeks; and dynamic hedging.

Teaching Assistant, Algorithms, University of Pennsylvania

Teaching Assistant for CIS 320 (Advanced Algorithms) with Prof. Sanjeev Khanna. Topics include graph algorithms, dynamic programming, NP-completeness theory, and approximation algorithms.

Teaching Assistant, Probability, University of Pennsylvania

Teaching Assistant for STAT 430/510 (introductory calculus-based probability) at the Wharton School, with Prof. Winston Lin. Responsible for hosting weekly office hours, grading assignments, and leading review sessions.

Mentoring and Leadership

Machine Learning Research at Penn (MLR@Penn)

I am the founder and former president of Machine Learning Research at Penn (MLR@Penn), the largest AI and Machine Learning student organization at Penn, with over 500 new members since its inception in March 2023. It serves as a cohesive community for undergraduate students excited about getting involved in AI/ML research, where they are encouraged to stay up to date with the latest findings and are mentored along their research journeys.

I started an in-house research group of ~30 students pursuing novel, impactful research toward publication, as well as an outreach committee that drives speaker engagements with both industry and academia. We hosted a mentorship workshop co-located with the inaugural Conference on Language Modeling (COLM) in Fall 2024. I continue to mentor student projects and advise the group's research directions.

Wharton Investment and Trading Group -- Quantitative Investment Strategies (QIS)

Wharton Investment and Trading Group (WITG) is the premier undergraduate organization for students pursuing careers in investing and trading. As a portfolio manager (one of 4 co-leaders) for the Quantitative Investment Strategies (QIS) Team, I led weekly discussions on quantitative research topics, including market impact, financial derivatives, and commodities markets, as well as quantitative trading strategies. The team also completed semester-long projects on areas of interest in quantitative finance.

Wharton Undergraduate Data Analytics Club (WUDAC)

I was the president of WUDAC, the largest data science-focused student organization at Penn and one of the largest student groups on campus. I was responsible for driving engagement in data science initiatives at Penn, hosting speaker events, and fostering collaborations with other student organizations as well as corporate sponsors.

Before this, I served as VP of Education, leading lectures in Python and R on data science, classical machine learning algorithms, and introductory deep learning.

Service

- Conference and Journal Reviewer: ICLR (2025, 2026), NeurIPS Position Paper Track (2025), TMLR (2025-)

- Workshop Reviewing: NeurIPS (ER 2025, ATTRIB 2024, FMDM 2023, R0-FoMo 2023), ICLR (SCI-FM 2025)

- Workshop Organization: MLR@Penn (Mentorship) Workshop on Foundation Models at COLM 2024

Resources

Awards

- Max Mintz Undergraduate TA Hall of Fame, University of Pennsylvania, 2024

- Citadel x Citadel Securities East Coast Regional Datathon Winner, 2022

- Coca-Cola Scholar, 2020